Managed Memory Variables

About Managed Memory

The CUDA Unified Memory component defines a managed memory space that allows all GPUs and hosts to “see a single coherent memory image with a common address space,” as described in the NVIDIA documentation “Unified Memory Programming.”

Allocating a variable in managed memory removes the need for explicit memory transfers between the host and the GPUs, because any allocation created in the managed memory space is automatically migrated between the host and the GPU.

A statically allocated managed variable is declared with the __managed__ memory space specifier. Managed memory can also be allocated dynamically, as described below.
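As a minimal sketch, assuming a CUDA-capable GPU and the nvcc compiler, the following declares a statically allocated __managed__ array that a kernel writes and host code then reads, with no cudaMemcpy() calls. The names squares, fill_squares, and sum_of_squares are hypothetical and chosen only for illustration.

```cuda
#include <cuda_runtime.h>

// Hypothetical example: a statically allocated array in managed memory.
// The same symbol is used by device code and by host code.
__device__ __managed__ int squares[8];

// Each thread writes the square of its index into managed memory.
__global__ void fill_squares(int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        squares[i] = i * i;
    }
}

// Launches the kernel, waits for it to finish, then sums the results on the
// host by reading the managed array directly. Returns -1 on a CUDA error.
int sum_of_squares() {
    const int n = 8;
    fill_squares<<<1, n>>>(n);
    if (cudaGetLastError() != cudaSuccess) {
        return -1;  // launch failed
    }
    // The host must not read data written by the kernel until it completes.
    if (cudaDeviceSynchronize() != cudaSuccess) {
        return -1;
    }
    int sum = 0;
    for (int i = 0; i < n; ++i) {
        sum += squares[i];  // no explicit device-to-host copy
    }
    return sum;
}
```

The cudaDeviceSynchronize() call is the important design point: migration is automatic, but the host still has to wait for the kernel before touching the data it produced.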

How TotalView Displays Managed Variables

To make it easier to recognize and work with managed variables, TotalView annotates their address with the term “Managed”, and, for statically allocated variables, adds the @managed_global type qualifier.

Statically Allocated Managed Variables

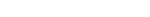

For example, consider this statically allocated managed variable, declared with the __managed__ keyword:

__device__ __managed__ int mv_int_initialized=10;

TotalView adds “Managed” in the Address field and decorates the type with @managed_global:
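A small program such as the following could serve as a target for examining mv_int_initialized in TotalView. It is a sketch assuming a CUDA-capable GPU; the kernel increment_managed and the host function read_after_increment are hypothetical names. The kernel changes the managed variable on the device, and the host reads the updated value without any copy.

```cuda
#include <cuda_runtime.h>

// Statically allocated managed variable, as declared in the example above.
__device__ __managed__ int mv_int_initialized = 10;

// A single device thread increments the managed variable.
__global__ void increment_managed() {
    mv_int_initialized += 1;
}

// Runs the kernel once and returns the value the host then observes,
// or -1 if a CUDA call fails.
int read_after_increment() {
    increment_managed<<<1, 1>>>();
    if (cudaGetLastError() != cudaSuccess) {
        return -1;  // launch failed
    }
    // Wait for the kernel before the host reads managed data.
    if (cudaDeviceSynchronize() != cudaSuccess) {
        return -1;
    }
    return mv_int_initialized;  // read directly on the host
}
```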

Dynamically Allocated Managed Variables

Managed memory can be dynamically allocated using the cudaMallocManaged() function, for example:

cudaMallocManaged((void**)&(elm->name), sizeof(char) * (strlen("hello") + 1) );
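For context, here is a minimal sketch of code around such a call, assuming a CUDA-capable GPU. Only the allocation of elm->name comes from the example above; the struct element type, its value member, the kernel first_char, and the function managed_struct_demo are hypothetical. Both the structure and its name string are allocated in managed memory, so host code and device code can follow the same pointers.

```cuda
#include <string.h>
#include <cuda_runtime.h>

// Hypothetical structure; only the name member appears in the original example.
struct element {
    char *name;
    int   value;
};

// Device code reads through both managed pointers (elm and elm->name).
__global__ void first_char(const element *elm, char *out) {
    *out = elm->name[0];
}

// Allocates the structure and its string in managed memory, runs the kernel,
// and returns the character it read ('\0' on any CUDA error).
// All managed allocations are freed before returning.
char managed_struct_demo() {
    element *elm = nullptr;
    char *out = nullptr;
    char result = '\0';

    if (cudaMallocManaged((void**)&elm, sizeof(element)) != cudaSuccess) {
        return '\0';
    }
    elm->name = nullptr;  // host writes managed memory directly

    // The allocation shown above: room for "hello" plus its terminator.
    if (cudaMallocManaged((void**)&(elm->name), sizeof(char) * (strlen("hello") + 1)) == cudaSuccess &&
        cudaMallocManaged((void**)&out, sizeof(char)) == cudaSuccess) {
        strcpy(elm->name, "hello");
        elm->value = 42;
        first_char<<<1, 1>>>(elm, out);
        // Check the launch, then wait before the host reads the result.
        if (cudaGetLastError() == cudaSuccess && cudaDeviceSynchronize() == cudaSuccess) {
            result = *out;
        }
    }
    cudaFree(out);        // cudaFree(nullptr) is a no-op
    cudaFree(elm->name);
    cudaFree(elm);
    return result;
}
```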

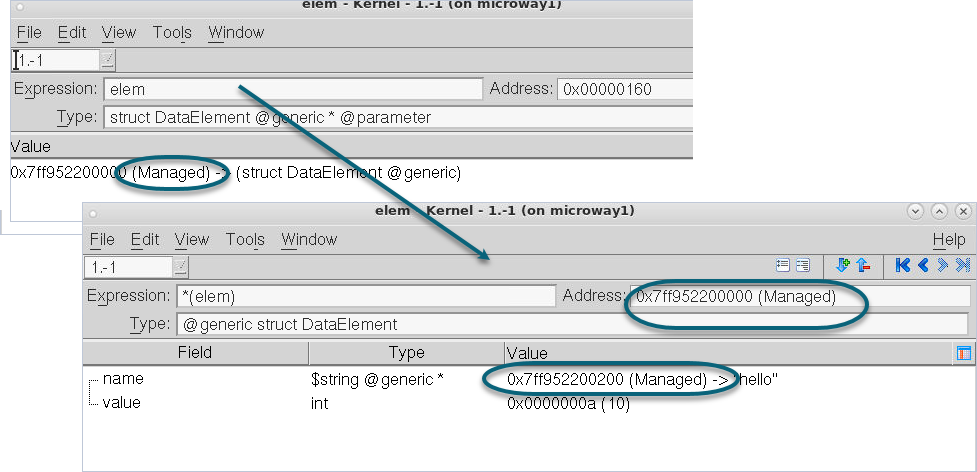

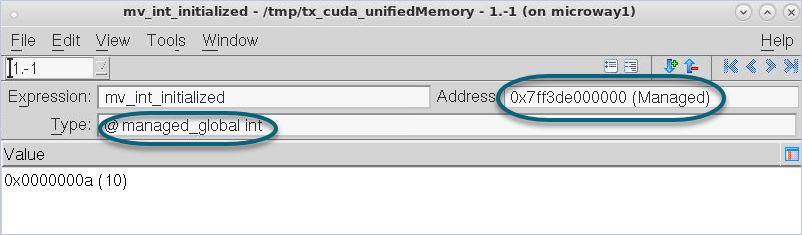

Here, the Stack Frame shows that the variable elem is a pointer whose value points into managed memory; TotalView annotates the pointer’s value with “(Managed)”.

Diving on elem shows the pointer, whose value points into managed memory. Diving on the pointer again displays the data it points to, and the Address value of that data is annotated with “Managed”. Note that one of its members, name, also points into managed memory.
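Outside the debugger, a program can make a comparable check on a pointer with the CUDA runtime function cudaPointerGetAttributes(). This is a sketch assuming CUDA 10 or later, where cudaPointerAttributes has a type field that equals cudaMemoryTypeManaged for managed memory; is_managed and check_pointer_kinds are hypothetical helper names. It is not meant to describe how TotalView itself determines its “Managed” annotation.

```cuda
#include <cstdlib>
#include <cuda_runtime.h>

// Returns true if ptr refers to CUDA managed memory.
// Assumes CUDA 10+, where cudaPointerAttributes exposes a 'type' field.
bool is_managed(const void *ptr) {
    cudaPointerAttributes attr;
    if (cudaPointerGetAttributes(&attr, ptr) != cudaSuccess) {
        // Older toolkits report an error for ordinary host pointers;
        // clear it so later CUDA calls are unaffected.
        cudaGetLastError();
        return false;
    }
    return attr.type == cudaMemoryTypeManaged;
}

// Compares a managed allocation with an ordinary malloc() allocation.
// Returns true only if the first is reported as managed and the second is not.
bool check_pointer_kinds() {
    int *managed = nullptr;
    if (cudaMallocManaged((void**)&managed, sizeof(int)) != cudaSuccess) {
        return false;
    }
    int *plain = static_cast<int*>(std::malloc(sizeof(int)));
    bool ok = is_managed(managed) && !is_managed(plain);
    std::free(plain);
    cudaFree(managed);
    return ok;
}
```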